We hope that these new GPU builds will enable many more packages to be added to the conda-forge channel! We are already looking forward to the 2.6.2 and 2.7 releases of TensorFlow and to adding Windows support in the future. There is an open PR, but it probably needs some poking in Bazel to get it to pass.

We are still missing Windows builds for TensorFlow (CPU & CUDA, unfortunately) and would love the community to help us out with that.

With the TensorFlow builds in place, conda-forge now has CUDA-enabled builds for PyTorch and Tensorflow, the two most popular deep learning libraries. We have open-sourced the Ansible playbook in GitHub and we’re working towards making it (more) generally useful for other long-running builds! Thanks to the generous support of OVH we were able to boot multiple 32-core virtual machines simultaneously to build the different TensorFlow variants. As one can imagine, this isn’t easily possible on an average “home computer”.įor this purpose, we have written an Ansible playbook that lets us boot up cloud machines which then build the feedstock (using the build-locally.py script).

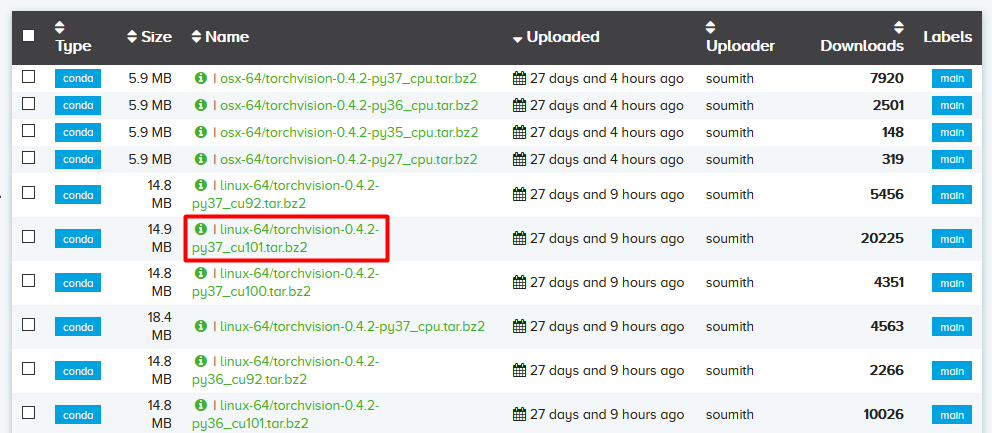

Our build matrix now includes 12 CUDA-enabled packages & 3 CPU packages (because we need separate packages per Python version). Building out the CUDA packages requires beefy machines – on a 32 core machine it still takes around 3 hours to build a single package. We now have a configuration in place that creates CUDA-enabled TensorFlow builds for all conda-forge supported configurations (CUDA 10.2, 11.0, 11.1, and 11.2+). But we managed, and the pull request got merged. Recently we’ve been able to add GPU-enabled TensorFlow builds to conda-forge! This was quite a journey, with multiple contributors trying different ways to convince the Bazel-based build system of TensorFlow to build CUDA-enabled packages. GPU enabled TensorFlow builds on conda-forge ¶ You can learn more about CUDA in CUDA zone and download it here. NVIDIA’s CUDA Toolkit includes everything you need to build accelerated GPU applications including GPU acceleration modules, a parser, programming tools, and CUDA runtime. Developers can code in popular languages such as C, C++, Python when using CUDA, and enforce parallelism in the form of a few simple keywords with extensions. The sequential portion of a function runs on the CPU in a GPU-accelerated program for optimized single-threaded performance, while the compute-intensive part, such as PyTorch code, runs parallel at thousands of GPU cores via CUDA. With CUDA, developers can dramatically increase the performance of their computer programs by utilizing GPU resources.
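To make the build matrix figures above concrete, here is a minimal sketch that enumerates the variants. The CUDA versions and the roughly 3 hours per CUDA build come from the post; the specific Python versions are an assumption (the post only implies that there are three), and `build_matrix` is a hypothetical helper, not part of the feedstock or the Ansible playbook.

```python
from itertools import product

# CUDA versions named in the post ("11.2" stands in for "11.2+").
CUDA_VERSIONS = ["10.2", "11.0", "11.1", "11.2"]

# Assumption: the post does not list the Python versions. Three are implied
# by 12 CUDA packages / 4 CUDA versions; these values are placeholders.
PYTHON_VERSIONS = ["3.7", "3.8", "3.9"]

# Figure from the post: about 3 hours per CUDA package on a 32-core machine.
HOURS_PER_CUDA_BUILD = 3


def build_matrix(cuda_versions, python_versions):
    """Return one (python, cuda) pair per package; cuda=None means CPU-only."""
    cuda_builds = list(product(python_versions, cuda_versions))
    cpu_builds = [(py, None) for py in python_versions]
    return cuda_builds + cpu_builds


matrix = build_matrix(CUDA_VERSIONS, PYTHON_VERSIONS)
cuda_count = sum(1 for _, cuda in matrix if cuda is not None)
cpu_count = len(matrix) - cuda_count
serial_hours = cuda_count * HOURS_PER_CUDA_BUILD

print(f"{cuda_count} CUDA-enabled packages, {cpu_count} CPU packages")
print(f"~{serial_hours} hours if the CUDA variants were built one after another")
```

Under these assumptions the CUDA variants alone would take on the order of 36 hours if built one after another, which is why building them on several cloud machines at the same time is attractive.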
